If you’re into the Internet of Things, you’re probably excited every time a new smart device comes out, especially a smart home device, since the idea that they could all work together seamlessly is so appealing.

Google is looking to make it even more interesting with a new project that uses radar technology to make any home object “smart.”

Making “Dumb” Objects Smart

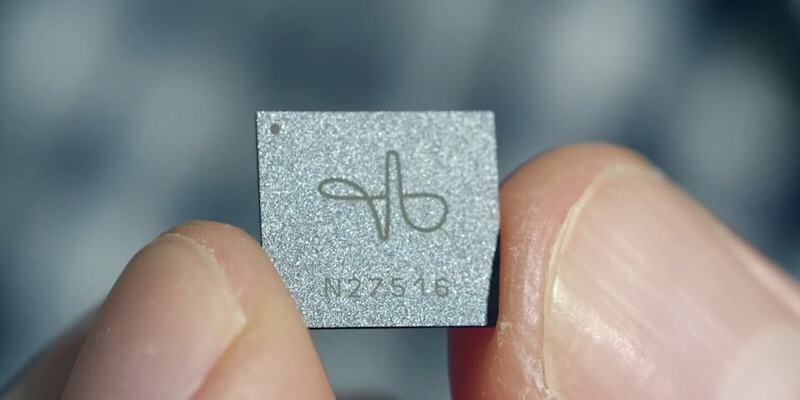

Radar-based computing has been around for quite some time, but just a few years ago, in 2015, Google’s Advanced Technology and Projects group debuted the Soli project: tiny radar-based sensors that make it possible to control gadgets with gestures such as tapping your fingers together.

Universities around the world are now exploring this very same radar technology. In particular, the University of St. Andrews in Scotland has picked up the Soli project.

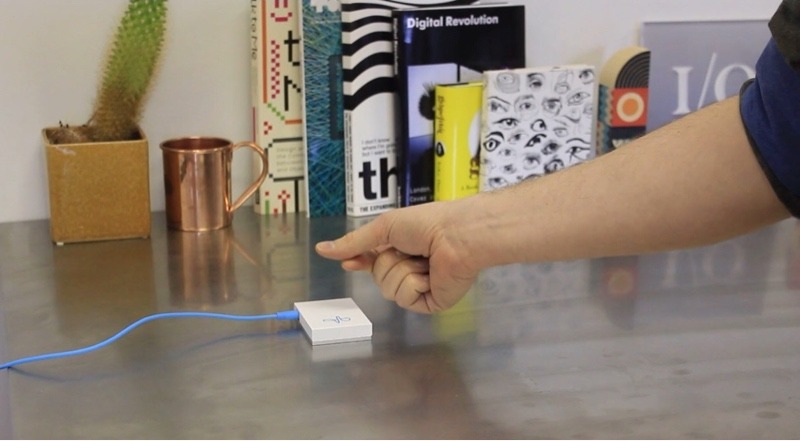

The university’s computer scientists have shown how Google’s Soli sensor could be used to detect interactions with objects, such as measuring and counting. This could include measuring the sand in an hourglass, counting sheets of paper, counting stacked poker chips, or identifying the shape of objects built with Legos. It would do all this with radar signals alone, without any image recognition.

While it’s obvious how these applications could be used in businesses, the university is exploring how the technology could be used in smart homes.

“Placing computation into every element in the home might not be the most scalable or secure way going forward,” Digital Trends learned from Professor Aaron Quigley, the Chair of Human Computer Interaction in the School of Computer Science at the university.

“An approach that has one or two elements that can sense the interactions of lots of other household objects could be a more suitable approach.”

Using this technology, you wouldn’t need to keep buying a bunch of new smart devices to allow things to work together, according to the professor.

“Soli interaction makes us realize that we don’t need to interact with computers that look anything like the computers we’ve interacted with in the past,” continued Quigley.

“We can interact with computers just using day-to-day objects. If I had this kind of sensor in, for instance, a regular kettle and a cup, it’s possible to detect them,” he explained. “You can interact with them, and the computer will understand what you’re doing. Suddenly, every physical object in your home becomes a way to communicate with your computer.”

What would happen in this case is that these objects around your home would be turned into “tokens.” There would be no learning curve, as you would just work with the objects as you regularly do, and the computer would figure out the rest.

To help this technology along, a U.S. Federal Communications Commission waiver is allowing Project Soli sensors to operate in the 57 to 64 GHz frequency band at higher power levels than are normally permitted. This should lead to a greater number of applications.

“This is why I love working in a university,” stated Quigley. “Students come in with none of the preconceptions that so many of us have. They have expectations that technology can be so much better. They’re unsurprised by what’s possible.

“Their approach is, ‘Why shouldn’t I be able to wave my hand in front of a dishwasher to start and stop it?’ Or ‘Why shouldn’t the washing machine recognize the contents of the clothes pile you’re carrying?’ You wouldn’t have to program in all of these things because the machine will just recognize them. This isn’t far away. It’s coming sooner than we think.”

It certainly does open up a world of possibilities, and exciting ones at that. The thought that we could control everything in our homes without having to buy new devices is a bit mind-blowing and could be a real game-changer.

What do you think about controlling everyday objects in your home with Google’s radar technology? Leave your thoughts in the comments below.

Image Credit: Photo and Videos on Digital Trends