The Internet of Things is one of those rare buzzwords that may actually live up to the hype, and Intel has the growth numbers to back it up.

In an interview with VentureBeat, Jonathan Ballon, general manager of Intel’s IoT group, argued that “we’re going from edge devices that were smart, meaning they had the ability to think, and now we’re moving to an era where those devices will be intelligent, meaning they have the ability to learn.”

More intelligence means more data, though. IoT is already data-heavy, and as the number of sensor-packed devices such as self-driving cars keeps growing, it makes less and less sense to ship huge amounts of raw data off to central data centers for processing.

That’s why Ballon is confident that future IoT development, especially in the enterprise, will focus on using powerful new processors to run AI workloads locally, at the device level. The more than fifty new products Intel rolled out at its San Francisco news event, such as its second-generation Xeon processor (boasting thirty-five times the performance of the previous year’s model), are a tangible display of the company’s optimism.

Predictions tend to age quickly these days, but it seems reasonable to assume that many connected devices will pack serious computing power and do a lot of local processing in the not-so-distant future.

What’s being computed on the edge?

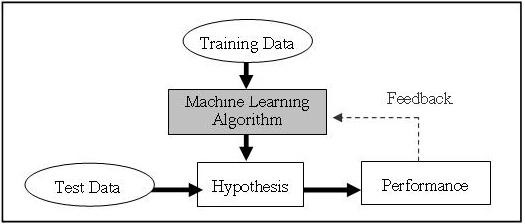

The rise of services like Google’s Stadia gaming platform might suggest that centralized clouds are still very much in vogue, but according to Ballon, most IoT devices, especially at the enterprise level, don’t need much two-way data exchange. They generate data from direct physical-world input, and they often have to react to it very quickly, which makes the relatively slow and resource-intensive round trip to the cloud and back a problem. Processing the data locally and then sending off a one-way burst of metadata is easier on networks and data centers, and it shortens reaction times.

Ballon uses the example of a robot on an assembly line, which doesn’t have time to push raw input data to the cloud, wait for it to be processed, then download and act on the results. Ideally, the robot would take input from its computer-vision system or another sensor, feed that data into a model running locally, complete its real-world reaction, and only then send some of the relevant metadata (a fraction of the size of the raw data) to a cloud platform to help improve future models.

As the models are retrained and improved, they can be pushed out to other IoT devices, which then benefit from data gathered by every other machine on the network.
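To make the loop Ballon describes a little more concrete, here is a minimal Python sketch, assuming a toy threshold "model" and in-memory lists standing in for a real network. Everything in it (`ThresholdModel`, `EdgeDevice`, `retrain`, and the metadata fields) is hypothetical and is not an Intel API; it only illustrates the pattern: infer and act locally, upload a small summary, and install a retrained model pushed back from the cloud.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class ThresholdModel:
    """Hypothetical stand-in for an on-device ML model: flags a part as
    defective when its measured deviation exceeds a threshold."""
    version: int
    threshold: float

    def predict(self, deviation: float) -> bool:
        return deviation > self.threshold


@dataclass
class EdgeDevice:
    """Hypothetical edge device (e.g. an assembly-line robot)."""
    model: ThresholdModel
    outbox: list = field(default_factory=list)  # metadata queued for upload

    def handle_frame(self, deviations: list[float]) -> list[bool]:
        # 1. Run inference locally on the raw readings; no cloud round trip.
        decisions = [self.model.predict(d) for d in deviations]
        # 2. The caller acts on these decisions immediately
        #    (e.g. rejects flagged parts on the line).
        # 3. Queue a compact summary instead of the raw readings.
        self.outbox.append({
            "model_version": self.model.version,
            "n_readings": len(deviations),
            "n_flagged": sum(decisions),
            "mean_deviation": mean(deviations),
        })
        return decisions

    def install_model(self, new_model: ThresholdModel) -> None:
        # 4. Accept a retrained model pushed from the cloud, if it is newer.
        if new_model.version > self.model.version:
            self.model = new_model


def retrain(summaries: list[dict], current: ThresholdModel) -> ThresholdModel:
    """Hypothetical cloud-side placeholder for real retraining: it simply moves
    the threshold halfway toward the fleet-wide mean deviation reported in the
    uploaded metadata, and bumps the version number."""
    fleet_mean = mean(s["mean_deviation"] for s in summaries)
    new_threshold = (current.threshold + fleet_mean) / 2
    return ThresholdModel(version=current.version + 1, threshold=new_threshold)


# Usage: two robots process readings locally, only their summaries are pooled,
# and the retrained model is pushed back out to both.
robot_a = EdgeDevice(ThresholdModel(version=1, threshold=0.5))
robot_b = EdgeDevice(ThresholdModel(version=1, threshold=0.5))
print(robot_a.handle_frame([0.1, 0.7, 0.2]))  # [False, True, False]
robot_b.handle_frame([0.3, 0.4, 0.9])
updated = retrain(robot_a.outbox + robot_b.outbox, robot_a.model)
for robot in (robot_a, robot_b):
    robot.install_model(updated)
```

The point of the design is what crosses the network: each batch of raw readings shrinks to a four-field summary on the way up, and only a version number and a threshold travel back down to every device.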

Self-driving cars, Ballon says, are a prime example of an edge-computing use case in a distributed system: they can’t afford any latency in their reaction times and can’t always depend on having the connectivity needed to compute in the cloud.

They’ll be able to talk to other cars and send data to the cloud, both to help improve the software that drives them and to report on traffic and weather conditions, but when they see something on the road ahead, they really need to be able to make their own decisions.

The future of number-crunching

Of course, running AI isn’t exactly a cakewalk for the hardware doing it, which is why a lot of consumer IoT still relies on relatively low-tech sensors. Cascade Lake, Intel’s new second-generation Xeon Scalable processor, isn’t something you’ll find in the average Alexa-enabled speaker, but it’s clearly another step Intel is taking toward getting AI out of data centers and into devices.

In terms of raw performance, it’s quite a boost. And since, as Ballon notes, Intel’s business has been growing significantly faster than the market average, we’re likely to see a lot more power coming down the pike over the next few years.
