One of the biggest issues with AI assistants is that they require constant Internet connectivity, which isn’t always available. This is where the concept of edge NLP comes into play. It’s about overcoming connectivity challenges by handling most processing and features on the device itself, even without an Internet connection.

Understanding NLP

NLP, which stands for natural language processing, isn’t something new. In fact, it’s existed for decades. In 1954, IBM’s 701 computer successfully translated Russian sentences into English in just seconds. This is just one example of how early NLP was being used.

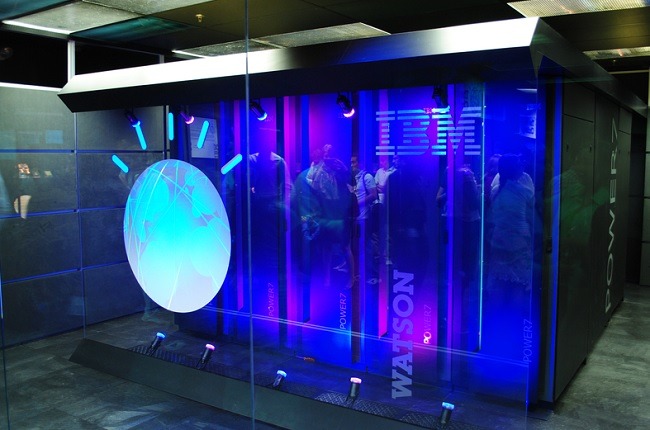

Obviously, advances in artificial intelligence have expanded how NLP is being used. Another example is IBM’s Watson, which seemed poised to be the future of AI in 2014 but didn’t quite live up to its potential in some areas.

Today, you probably recognize NLP more for its role in virtual assistants. When you chat with Alexa, Siri, or Google Assistant, your words are processed remotely, and the results are sent back so the assistant can respond accordingly. That is, of course, only if you have an active Internet connection.

Using Edge NLP

Latency is a major issue when it comes to processing the growing number of requests. A request over a slow connection may take only 10 seconds to process, but to the end user, it may as well be an hour. For applications requiring near real-time processing, such as translating conversations during a meeting or in healthcare, high latency isn’t acceptable.

This is where edge computing has made NLP faster and more useful overall. Edge computing is also used for a variety of IoT devices and applications. The concept is fairly simple. Instead of requests being sent hundreds or thousands of miles away to a central data center, requests are sent to edge networks, which may only be a dozen miles away. The shorter the distance data has to travel, the faster the response time.
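
As a rough illustration of why distance matters, the sketch below estimates propagation delay alone. It assumes signals travel through fiber at about 200,000 km/s (roughly two-thirds the speed of light) and ignores routing, queuing, and server processing, which in practice often add much more delay. The function name and the example distances are illustrative, not measurements from any real network.

```python
# Assumption: signals in optical fiber travel at about two-thirds
# the speed of light, or roughly 200,000 km per second.
FIBER_SPEED_KM_PER_S = 200_000.0
KM_PER_MILE = 1.609344


def round_trip_ms(distance_miles: float) -> float:
    """Estimate round-trip propagation delay in milliseconds.

    Covers only the time the signal spends travelling there and back.
    """
    distance_km = distance_miles * KM_PER_MILE
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000


if __name__ == "__main__":
    # Hypothetical distances: a far-off data center vs. a nearby edge node.
    for label, miles in [("central data center", 1000), ("edge node", 12)]:
        print(f"{label}: about {round_trip_ms(miles):.2f} ms round trip")
```

Propagation is only one part of total latency, so treat these numbers as a lower bound rather than a prediction.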

Once again, you may notice that this all depends on a reliable connection. While some devices are able to store requests locally, they still won’t process them until there’s an active connection again.
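
The store-now, process-later behavior described above can be sketched as a simple queue. This is a minimal illustration under stated assumptions, not how any particular assistant works: the class name `StoreAndForwardClient`, the `online` flag, and the `send` callback standing in for the remote service are all hypothetical.

```python
from collections import deque
from typing import Callable, Deque, List


class StoreAndForwardClient:
    """Holds requests locally and sends them only while a connection exists."""

    def __init__(self, send: Callable[[str], str]) -> None:
        self._send = send                      # hypothetical remote NLP call
        self._pending: Deque[str] = deque()    # requests waiting for a connection
        self.online = False                    # set by the device's network layer

    def submit(self, request: str) -> List[str]:
        """Queue a request, then try to process whatever is pending."""
        self._pending.append(request)
        return self.flush()

    def flush(self) -> List[str]:
        """Send all pending requests in order; do nothing while offline."""
        if not self.online:
            return []
        responses = []
        while self._pending:
            responses.append(self._send(self._pending.popleft()))
        return responses
```

While `online` is false, `submit` returns an empty list and the user gets no answer, which is exactly the limitation embedded NLP tries to remove.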

Modern Edge NLP

To reduce latency further and improve privacy and security, some devices are now using embedded NLP. This is the ultimate edge network. After all, if processing doesn’t have to go beyond the device, latency is all but eliminated.

KidSense is a great example. Its speech recognition technology powers the Roybi robot, which can even process two different languages at once if two children are trying to talk to each other.

But unlike similar robots, nothing is stored in the cloud. Everything is processed locally. This requires a unique combination of advanced AI processing within the robot and low power consumption, so the robot stays useful for more than just a few minutes.

Processing occurs in one of two ways. For complete offline access, embedded processing relies solely upon what’s stored on the device. There is also an edge NLP mode, which KidSense calls Edge To Speech technology. In this mode, the robot learns more about how to interact with a child based on data sent to it from local servers, which helps it adapt over time.

Since so much is already embedded, there’s less for the device to request from the cloud. And no data from your child is sent to remote servers. Data flows only to the device, to keep it up to date. All processing and translating is still performed on the device itself.
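
The one-way data flow described above can be sketched in a few lines. This is a toy model, not KidSense’s implementation: the class `EmbeddedAssistant`, its methods, and the keyword lookup standing in for real speech and language models are all assumptions made for illustration.

```python
from typing import Dict


class EmbeddedAssistant:
    """Answers entirely from on-device data; updates only flow inward."""

    FALLBACK = "Sorry, I don't understand yet."

    def __init__(self, intents: Dict[str, str]) -> None:
        # Keyword-to-response table stored on the device (a stand-in
        # for a real embedded NLP model).
        self._intents = dict(intents)

    def handle(self, utterance: str) -> str:
        """Process an utterance locally. Nothing leaves the device."""
        words = utterance.lower().split()
        for keyword, response in self._intents.items():
            if keyword in words:
                return response
        return self.FALLBACK

    def apply_update(self, new_intents: Dict[str, str]) -> None:
        """Merge data pushed to the device, for example from a local server."""
        self._intents.update(new_intents)


if __name__ == "__main__":
    robot = EmbeddedAssistant({"hello": "Hi there!"})
    print(robot.handle("Hello robot"))
    robot.apply_update({"sing": "La la la!"})
    print(robot.handle("Please sing a song"))
```

Note that there is no method for uploading utterances: the only network-facing operation is `apply_update`, which receives data and never sends any.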

Relying on Embedded AI

To increase the role of embedded AI, Expert.ai offers free tools to help developers add this functionality to their devices. Even when used with edge computing, embedded AI processes requests significantly faster. It also offers the advantage of still providing results even when a connection isn’t available.

Edge NLP isn’t just about edge computing anymore. It’s also about processing data locally, providing the optimal experience for end users and potentially increasing privacy and security. Once this is built into AI assistants, they’ll be available nearly anywhere and anytime.

Image credit: Wikimedia Commons/Clockready, Roybi Media Kit
