Voice assistants help us with a growing number of tasks, especially Alexa, which is picking up new skills all the time. It does more than just play music, answer questions, and tell you the weather. It can control your smart home devices and any other application that has an Alexa skill.

But the key word here is control. We need to be careful about which skills we enable on Alexa and what we allow it to control. A voice command can be used to carry out a SQL injection attack that exposes your data in an app Alexa is connected to, such as a banking app.

Hacked via Alexa

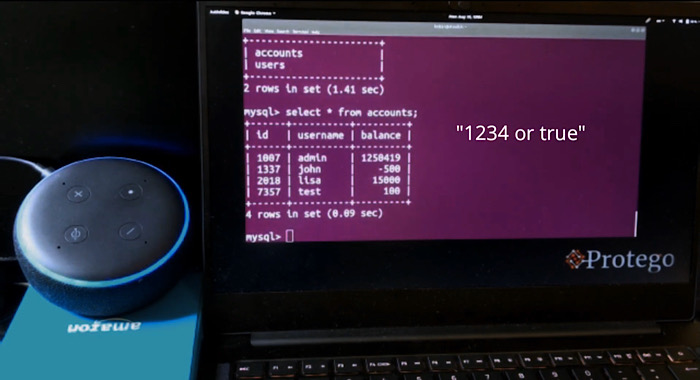

Protego explained how such an attack could occur and supplied a video of the hack at work.

The bank account hack in the video is just one example of how this attack could occur. It doesn’t have to be Alexa, and it doesn’t have to be a bank account. Basically, any application that connects to a voice assistant and doesn’t handle spoken input securely is potentially vulnerable.

SQL is a language used to create and query the tables in a database, including the databases behind many websites and apps. An application developer may choose to share the app’s information with a voice assistant, such as Alexa, by building a “skill” for that app. The skill passes what you say to the app, and the app answers with information from its database.

If that app is not secure, for example because it builds its database queries directly from the words the user speaks, anyone can use a voice assistant to access the data the developer has made available through it.
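To make the idea concrete, here is a minimal sketch of what an insecure and a more secure lookup might look like. This is not the code from the Protego demonstration. The `accounts` table, the function names, and the sample data are all made up for illustration, and it assumes the voice assistant has already turned the spoken words into a text string that gets handed to the app.

```python
import sqlite3


def make_db():
    """Build a throwaway in-memory database (hypothetical sample data)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (owner TEXT, balance REAL)")
    conn.executemany(
        "INSERT INTO accounts VALUES (?, ?)",
        [("alice", 120.0), ("bob", 9500.0)],
    )
    return conn


def balance_unsafe(conn, spoken_name):
    # Insecure: the transcribed speech is pasted straight into the SQL text,
    # so a crafted phrase can change what the query means.
    query = (
        "SELECT owner, balance FROM accounts WHERE owner = '"
        + spoken_name
        + "'"
    )
    return conn.execute(query).fetchall()


def balance_safe(conn, spoken_name):
    # Safer: a parameterized query treats the input as data only,
    # never as part of the SQL statement itself.
    return conn.execute(
        "SELECT owner, balance FROM accounts WHERE owner = ?",
        (spoken_name,),
    ).fetchall()


if __name__ == "__main__":
    conn = make_db()
    crafted = "alice' OR '1'='1"  # hypothetical malicious input
    print("unsafe:", balance_unsafe(conn, crafted))
    print("safe:  ", balance_safe(conn, crafted))
```

With the crafted input, the insecure version hands back every account in the table, while the parameterized version finds no owner by that odd name and returns nothing. Real skills and banking back ends are more involved, but the basic weakness is the same.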

Warning

This does not mean everyone who uses Alexa needs to worry about their bank account being hacked. My bank account is not connected to Alexa, or Siri for that matter. Someone could ask either one what my account balance is, but they wouldn’t receive an answer, because I have never linked my account or given the assistant that information.

However, it should serve as a warning about what you do connect to your voice assistants. Not all the data in your applications is in danger. What is in danger is the data in any connected application that is not secure.

I’m pretty sure my bank has a secure app. Even so, I would never connect it to Alexa or Siri. I also wouldn’t connect it to any app that lets a voice assistant answer sensitive questions, such as what my account balance is.

Does this voice-control SQL injection attack concern you? Are you reconsidering all your connected applications? Tell us how you feel about this news in the comments below.
